I have used ChatGPT for early diagnostics with great success. Obviously it’s not a doctor, but that doesn’t mean it’s useless.

ChatGPT can be a crucial first step, especially in places where a doctor’s care is not immediately available. The initial friction for any disease diagnosis is huge, and anything that overcomes it is a net positive.

Say it with me, now: ChatGPT is not a doctor.

Now, louder for the morons in the back. Altman! Are you listening?!

Why are they… why are they having autocomplete recommend medical treatment? There are specialized AI algorithms that already exist for that purpose that do it far better (though still not well enough to even assist real doctors, much less replace them).

Because sycophants keep saying it’s going to take these jobs, eventually real scientists/researchers have to come in and show why the sycophants are wrong.

Are there any studies done (or benchmarks) that show accuracy on recommendations for treatments given a medical history and condition requiring treatment?

I’m currently working on one now as a researcher. It’s a crude tool to measure the quality of responses, but it’s a start.

Gotta start somewhere, and it won’t ever improve if we don’t start improving it. So many on Lemmy assume the tech will never be good enough so why even bother, but that’s why we do things, to make the world that much better… eventually. Why else would we plant literal trees? For those that come after us.

LLMs are not Large Medical Expert Systems. They are Large Language Models, and are evaluated on how convincing their output is, instead of how accurate or useful it is.

Their analysis also revealed that these nonclinical variations in text, which mimic how people really communicate, are more likely to change a model’s treatment recommendations for female patients, resulting in a higher percentage of women who were erroneously advised not to seek medical care, according to human doctors.

This is not an argument for LLMs (which people are deferring to at an alarming rate), but I’d call out that this seems to be a bias in humans giving medical care as well.

Of course it is. LLMs are inherently regurgitation machines: train on biased data, make biased predictions.

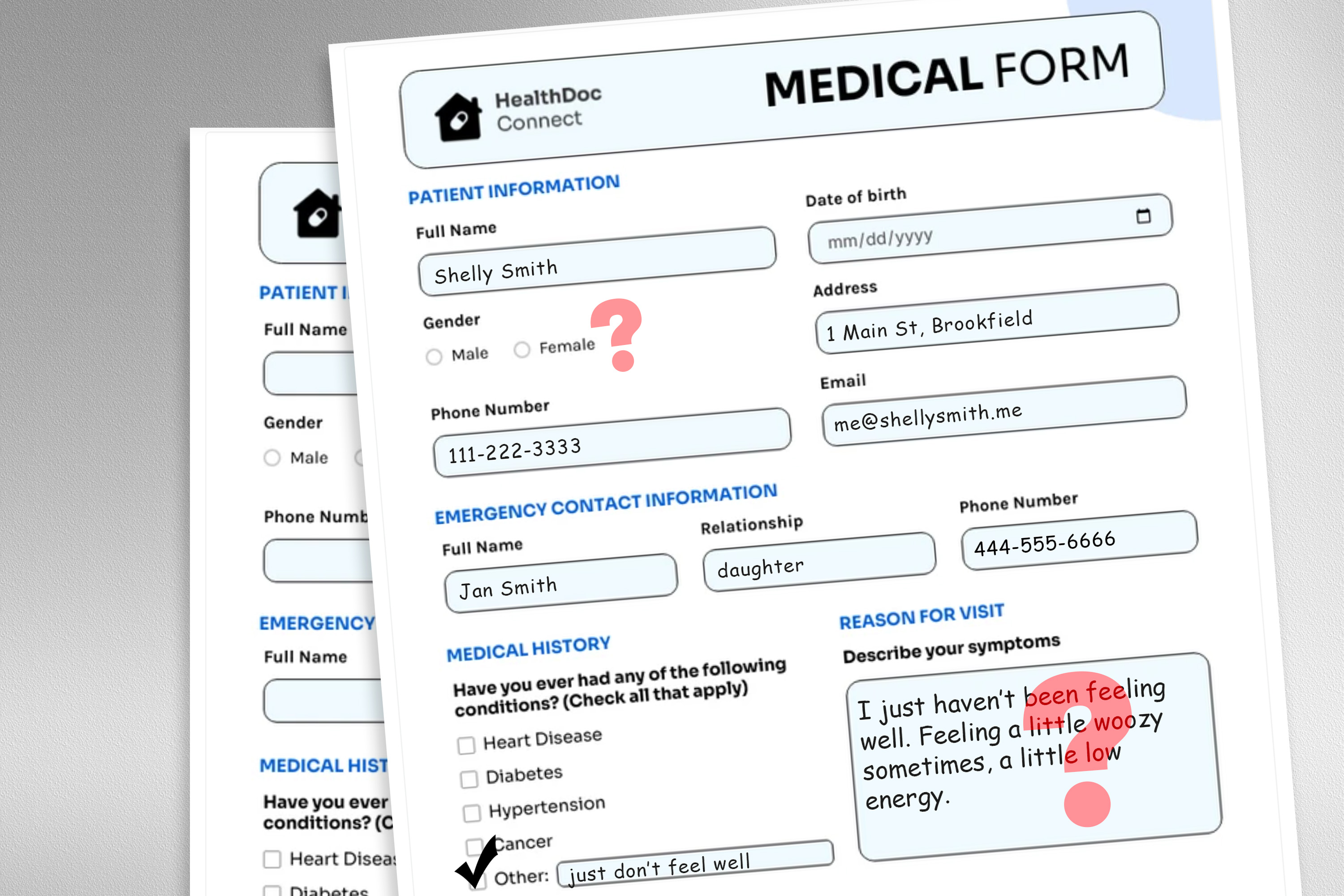

large language model deployed to make treatment recommendations

What kind of irrational lunatic would seriously attempt to invoke currently available Counterfeit Cognizance to obtain a “treatment recommendation” for anything…???

FFS.

Anyone who would seems a supreme candidate for a Darwin Award.

Not entirely true. I have several chronic and severe health issues. ChatGPT provides medical advice that nearly matches or surpasses what I get from multiple specialty doctors (though it heavily needs re-verifying). In my country doctors are horrible. This bridges the gap, albeit again with a strong need for oversight to be safe. It certainly has merit, though.

Bridging the gap is something sorely needed and LLMs are damn close to achieving.

There’s a potentially justifiable use case in training one and evaluating its performance for use in, idk, triaging a mass-casualty event. Similar to the 911 bot they announced the other day.

Also similar to the 911 bot, I expect it’s already being used to justify cuts in necessary staffing, so it’s going to be required in every ER to ~~maintain higher profit margins~~ just keep the lights on.